Introduction

Retrieval-augmented technology (RAG) techniques are remodeling AI by enabling giant language fashions (LLMs) to entry and combine data from exterior vector databases with no need fine-tuning. This method permits LLMs to ship correct, up-to-date responses by dynamically retrieving the most recent information, lowering computational prices, and bettering real-time decision-making.

For instance, corporations like JPMorgan Chase use RAG techniques to automate the evaluation of monetary paperwork, extracting key insights essential for funding choices. These techniques have allowed monetary giants to course of 1000’s of monetary statements, contracts, and experiences, extracting key monetary metrics and insights which can be important for funding choices. Nevertheless, a problem arises when coping with non-machine-readable codecs like scanned PDFs, which require Optical Character Recognition (OCR) for correct information extraction. With out OCR expertise, important monetary information from paperwork like S-1 filings and Okay-1 types can’t be precisely extracted and built-in, limiting the effectiveness of the RAG system in retrieving related data.

On this article, we’ll stroll you thru a step-by-step information to constructing a monetary RAG system. We’ll additionally discover efficient options by Nanonets for dealing with monetary paperwork which can be machine-unreadable, guaranteeing that your system can course of all related information effectively.

Understanding RAG Programs

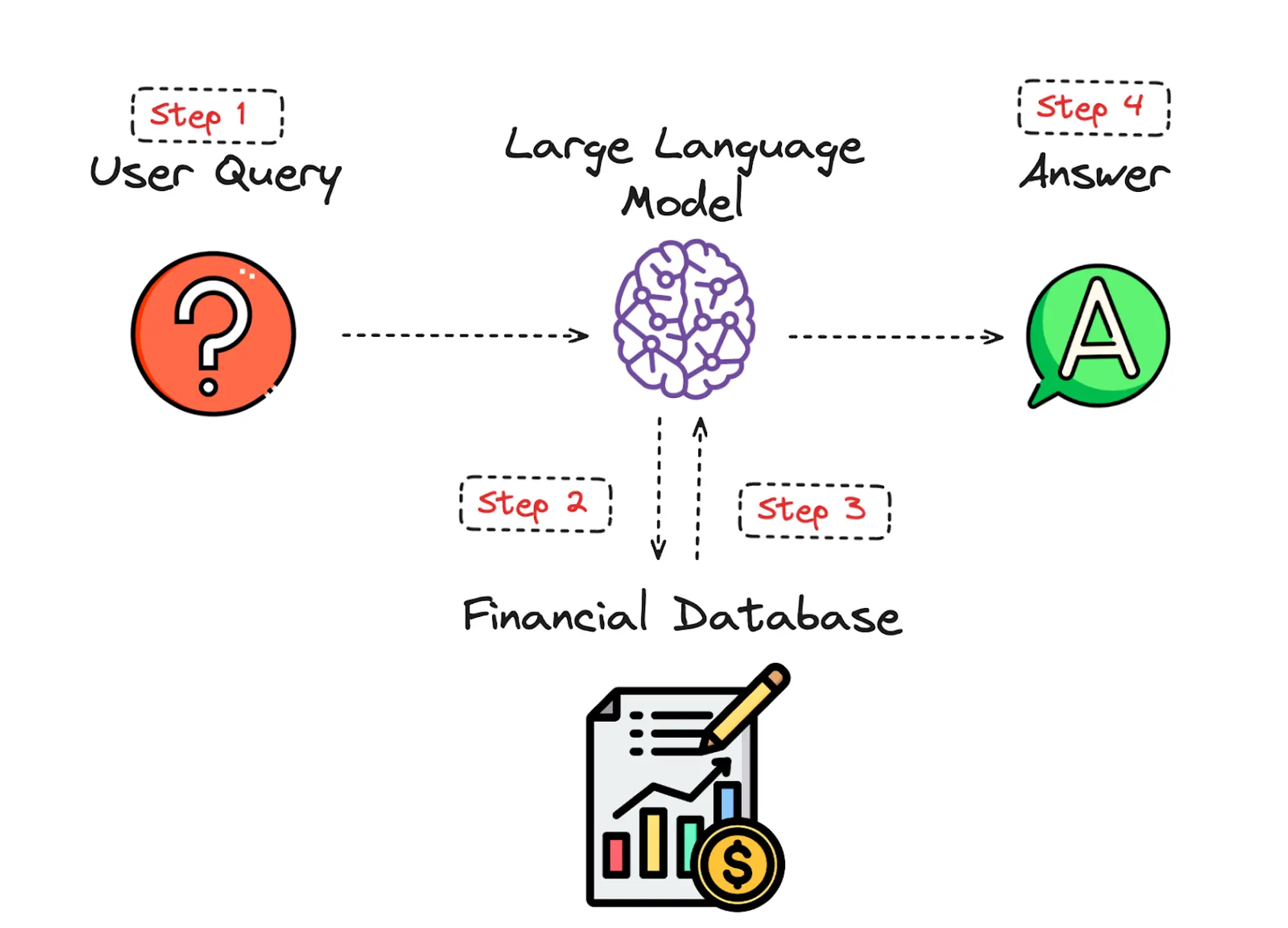

Constructing a Retrieval-Augmented Era (RAG) system includes a number of key elements that work collectively to reinforce the system’s capability to generate related and contextually correct responses by retrieving and using exterior data. To higher perceive how RAG techniques function, let’s shortly evaluation the 4 predominant steps, ranging from when the consumer enters their question to when the mannequin returns its reply.

1. Person Enters Question

The consumer inputs a question by a consumer interface, reminiscent of an internet kind, chat window, or voice command. The system processes this enter, guaranteeing it’s in an acceptable format for additional evaluation. This would possibly contain fundamental textual content preprocessing like normalization or tokenization.

The question is handed to the Massive Language Mannequin (LLM), reminiscent of Llama 3, which interprets the question and identifies key ideas and phrases. The LLM assesses the context and necessities of the question to formulate what data must be retrieved from the database.

2. LLM Retrieves Information from the Vector Database

The LLM constructs a search question primarily based on its understanding and sends it to a vector database reminiscent of FAISS, which is a library developed by Fb AI that gives environment friendly similarity search and clustering of dense vectors, and is broadly used for duties like nearest neighbor search in giant datasets.

The embeddings which is the numerical representations of the textual information that’s used so as to seize the semantic which means of every phrase within the monetary dataset, are saved in a vector database, a system that indexes these embeddings right into a high-dimensional area. Transferring on, a similarity search is carried out which is the method of discovering essentially the most related objects primarily based on their vector representations, permitting us to extract information from essentially the most related paperwork.

The database returns a listing of the highest paperwork or information snippets which can be semantically just like the question.

3. Up-to-date RAG Information is Returned to the LLM

The LLM receives the retrieved paperwork or information snippets from the database. This data serves because the context or background information that the LLM makes use of to generate a complete response.

The LLM integrates this retrieved information into its response-generation course of, guaranteeing that essentially the most present and related data is taken into account.

4. LLM Replies Utilizing the New Identified Information and Sends it to the Person

Utilizing each the unique question and the retrieved information, the LLM generates an in depth and coherent response. This response is crafted to handle the consumer’s question precisely, leveraging the up-to-date data offered by the retrieval course of.

The system delivers the response again to the consumer by the identical interface they used to enter their question.

Step-by-Step Tutorial: Constructing the RAG App

Tips on how to Construct Your Personal Rag Workflows?

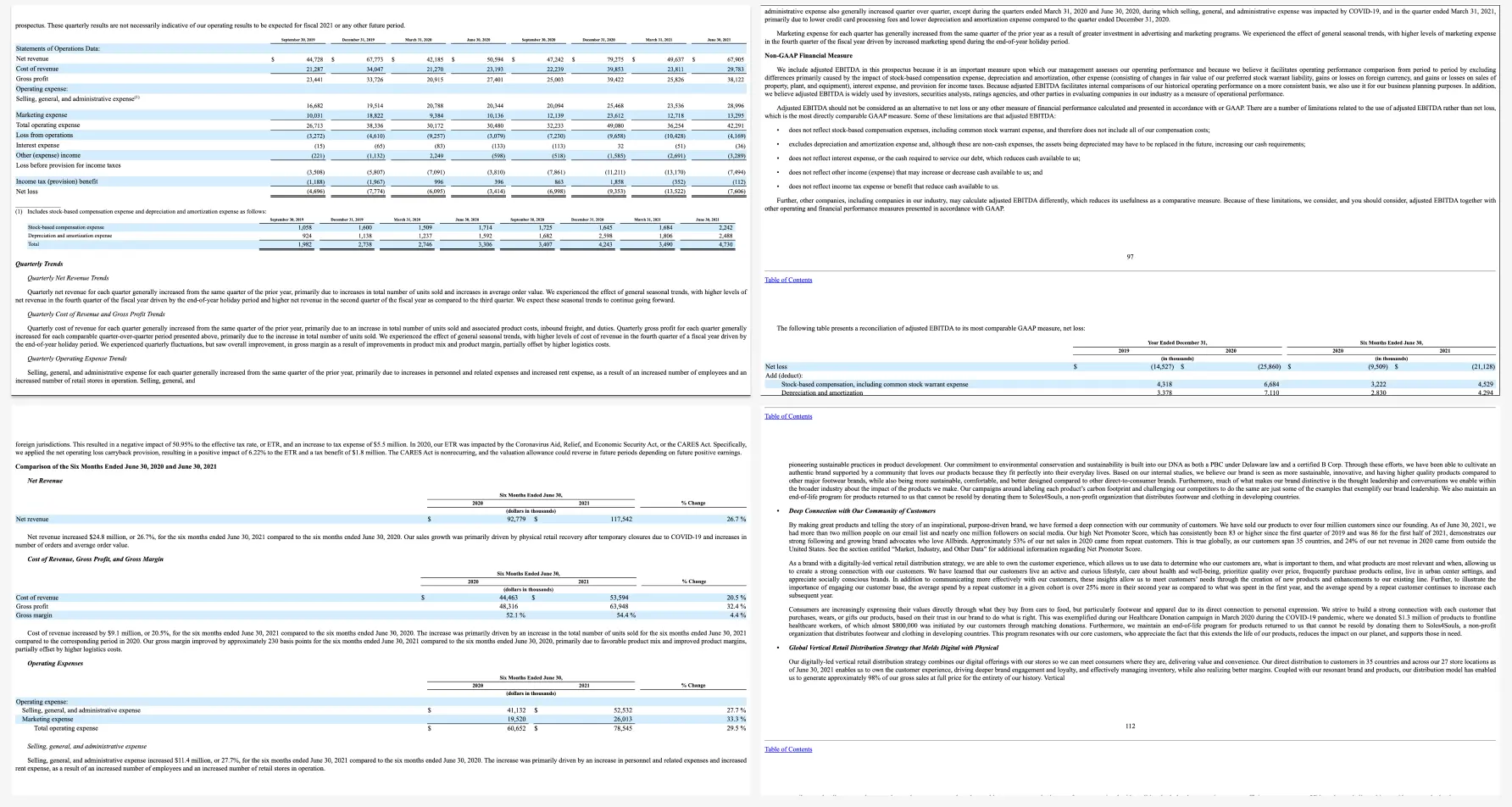

As we acknowledged earlier, RAG techniques are extremely useful within the monetary sector for superior information retrieval and evaluation. On this instance, we’re going to analyze an organization often known as Allbirds. We’re going to remodel the Allbirds S-1 doc into phrase embeddings—numerical values that machine studying fashions can course of—we allow the RAG system to interpret and extract related data from the doc successfully.

This setup permits us to ask Llama LLM fashions questions that they have not been particularly skilled on, with the solutions being sourced from the vector database. This technique leverages the semantic understanding of the embedded S-1 content material, offering correct and contextually related responses, thus enhancing monetary information evaluation and decision-making capabilities.

For our instance, we’re going to make the most of S-1 monetary paperwork which include important information about an organization’s monetary well being and operations. These paperwork are wealthy in each structured information, reminiscent of monetary tables, and unstructured information, reminiscent of narrative descriptions of enterprise operations, danger elements, and administration’s dialogue and evaluation. This combine of knowledge varieties makes S-1 filings splendid candidates for integrating them into RAG techniques. Having mentioned that, let’s begin with our code.

Step 1: Putting in the Mandatory Packages

To begin with, we’re going to be sure that all mandatory libraries and packages are put in. These libraries embrace instruments for information manipulation (numpy, pandas), machine studying (sci-kit-learn), textual content processing (langchain, tiktoken), vector databases (faiss-cpu), transformers (transformers, torch), and embeddings (sentence-transformers).

!pip set up numpy pandas scikit-learn

!pip set up langchain tiktoken faiss-cpu transformers pandas torch openai

!pip set up sentence-transformers

!pip set up -U langchain-community

!pip set up beautifulsoup4

!pip set up -U langchain-huggingface

Step 2: Importing Libraries and Initialize Fashions

On this part, we will probably be importing the mandatory libraries for information dealing with, machine studying, and pure language processing.

As an illustration, the Hugging Face Transformers library gives us with highly effective instruments for working with LLMs like Llama 3. It permits us to simply load pre-trained fashions and tokenizers, and to create pipelines for numerous duties like textual content technology. Hugging Face’s flexibility and extensive assist for various fashions make it a go-to selection for NLP duties. The utilization of such library is determined by the mannequin at hand,you possibly can make the most of any library that gives a functioning LLM.

One other necessary library is FAISS. Which is a extremely environment friendly library for similarity search and clustering of dense vectors. It permits the RAG system to carry out fast searches over giant datasets, which is crucial for real-time data retrieval. Related libraries that may carry out the identical process do embrace Pinecone.

Different libraries which can be used all through the code embrace such pandas and numpy which permit for environment friendly information manipulation and numerical operations, that are important in processing and analyzing giant datasets.

Be aware: RAG techniques supply a substantial amount of flexibility, permitting you to tailor them to your particular wants. Whether or not you are working with a specific LLM, dealing with numerous information codecs, or selecting a selected vector database, you possibly can choose and customise libraries to finest fit your targets. This adaptability ensures that your RAG system could be optimized for the duty at hand, delivering extra correct and environment friendly outcomes.

import os

import pandas as pd

import numpy as np

import faiss

from bs4 import BeautifulSoup

from langchain.vectorstores import FAISS

from transformers import AutoTokenizer, AutoModelForCausalLM, AutoConfig, pipeline

import torch

from langchain.llms import HuggingFacePipeline

from sentence_transformers import SentenceTransformer

from transformers import AutoModelForCausalLM, AutoTokenizer

Step 3: Defining Our Llama Mannequin

Outline the mannequin checkpoint path to your Llama 3 mannequin.

model_checkpoint="/kaggle/enter/llama-3/transformers/8b-hf/1"

Load the unique configuration instantly from the checkpoint.

model_config = AutoConfig.from_pretrained(model_checkpoint, trust_remote_code=True)

Allow gradient checkpointing to avoid wasting reminiscence.

model_config.gradient_checkpointing = True

Load the mannequin with the adjusted configuration.

mannequin = AutoModelForCausalLM.from_pretrained(

model_checkpoint,

config=model_config,

trust_remote_code=True,

device_map='auto'

)

Load the tokenizer.

tokenizer = AutoTokenizer.from_pretrained(model_checkpoint)

The above part initializes the Llama 3 mannequin and its tokenizer. It masses the mannequin configuration, adjusts the rope_scaling parameters to make sure they’re accurately formatted, after which masses the mannequin and tokenizer.

Transferring on, we’ll create a textual content technology pipeline with blended precision (fp16).

text_generation_pipeline = pipeline(

"text-generation",

mannequin=mannequin,

tokenizer=tokenizer,

torch_dtype=torch.float16,

max_length=256, # Additional scale back the max size to avoid wasting reminiscence

device_map="auto",

truncation=True # Guarantee sequences are truncated to max_length

)

Initialize Hugging Face LLM pipeline.

llm = HuggingFacePipeline(pipeline=text_generation_pipeline)

Confirm the setup with a immediate.

immediate = """

consumer

Hey it's good to fulfill you!

assistant

"""

output = llm(immediate)

print(output)

This creates a textual content technology pipeline utilizing the Llama 3 mannequin and verifies its performance by producing a easy response to a greeting immediate.

Step 4: Defining the Helper Features

load_and_process_html(file_path) Perform

The load_and_process_html perform is accountable for loading the HTML content material of monetary paperwork and extracting the related textual content from them. Since monetary paperwork could include a mixture of structured and unstructured information, this perform tries to extract textual content from numerous HTML tags like <p>, <div>, and <span>. By doing so, it ensures that each one the vital data embedded inside completely different components of the doc is captured.

With out this perform, it might be difficult to effectively parse and extract significant content material from HTML paperwork, particularly given their complexity. The perform additionally incorporates debugging steps to confirm that the right content material is being extracted, making it simpler to troubleshoot points with information extraction.

def load_and_process_html(file_path):

with open(file_path, 'r', encoding='latin-1') as file:

raw_html = file.learn()

# Debugging: Print the start of the uncooked HTML content material

print(f"Uncooked HTML content material (first 500 characters): {raw_html[:500]}")

soup = BeautifulSoup(raw_html, 'html.parser')

# Attempt completely different tags if <p> would not exist

texts = [p.get_text() for p in soup.find_all('p')]

# If no <p> tags discovered, strive different tags like <div>

if not texts:

texts = [div.get_text() for div in soup.find_all('div')]

# If nonetheless no texts discovered, strive <span> or print extra of the HTML content material

if not texts:

texts = [span.get_text() for span in soup.find_all('span')]

# Ultimate debugging print to make sure texts are populated

print(f"Pattern texts after parsing: {texts[:5]}")

return texts

create_and_store_embeddings(texts) Perform

The create_and_store_embeddings perform converts the extracted texts into embeddings, that are numerical representations of the textual content. These embeddings are important as a result of they permit the RAG system to grasp and course of the textual content material semantically. The embeddings are then saved in a vector database utilizing FAISS, enabling environment friendly similarity search.

def create_and_store_embeddings(texts):

mannequin = SentenceTransformer('all-MiniLM-L6-v2')

if not texts:

increase ValueError("The texts listing is empty. Make sure the HTML file is accurately parsed and comprises textual content tags.")

vectors = mannequin.encode(texts, convert_to_tensor=True)

vectors = vectors.cpu().detach().numpy() # Convert tensor to numpy array

# Debugging: Print shapes to make sure they're appropriate

print(f"Vectors form: {vectors.form}")

# Guarantee that there's no less than one vector and it has the right dimensions

if vectors.form[0] == 0 or len(vectors.form) != 2:

increase ValueError("The vectors array is empty or has incorrect dimensions.")

index = faiss.IndexFlatL2(vectors.form[1]) # Initialize FAISS index

index.add(vectors) # Add vectors to the index

return index, vectors, texts

retrieve_and_generate(question, index, texts, vectors, ok=1) Perform

The retrieve perform handles the core retrieval strategy of the RAG system. It takes a consumer’s question, converts it into an embedding, after which performs a similarity search throughout the vector database to seek out essentially the most related texts. The perform returns the highest ok most related paperwork, which the LLM will use to generate a response. As an illustration, in our instance we will probably be returning the highest 5 related paperwork.

def retrieve_and_generate(question, index, texts, vectors, ok=1):

torch.cuda.empty_cache() # Clear the cache

mannequin = SentenceTransformer('all-MiniLM-L6-v2')

query_vector = mannequin.encode([query], convert_to_tensor=True)

query_vector = query_vector.cpu().detach().numpy()

# Debugging: Print shapes to make sure they're appropriate

print(f"Question vector form: {query_vector.form}")

if query_vector.form[1] != vectors.form[1]:

increase ValueError("Question vector dimension doesn't match the index vectors dimension.")

D, I = index.search(query_vector, ok)

retrieved_texts = [texts[i] for i in I[0]] # Guarantee that is appropriate

# Restrict the variety of retrieved texts to keep away from overwhelming the mannequin

context = " ".be part of(retrieved_texts[:2]) # Use solely the primary 2 retrieved texts

# Create a immediate utilizing the context and the unique question

immediate = f"Based mostly on the next context:n{context}nnAnswer the query: {question}nnAnswer:. If you do not know the reply, return that you just can not know."

# Generate the reply utilizing the LLM

generated_response = llm(immediate)

# Return the generated response

return generated_response.strip()

Step 5: Loading and Processing the Information

In the case of loading and processing information, there are numerous strategies relying on the information sort and format. On this tutorial, we give attention to processing HTML recordsdata containing monetary paperwork. We use the load_and_process_html perform that we outlined above to learn the HTML content material and extract the textual content, which is then remodeled into embeddings for environment friendly search and retrieval. Yow will discover the hyperlink to the information we’re utilizing right here.

# Load and course of the HTML file

file_path = "/kaggle/enter/s1-allbirds-document/S-1-allbirds-documents.htm"

texts = load_and_process_html(file_path)

# Create and retailer embeddings within the vector retailer

vector_store, vectors, texts = create_and_store_embeddings(texts)

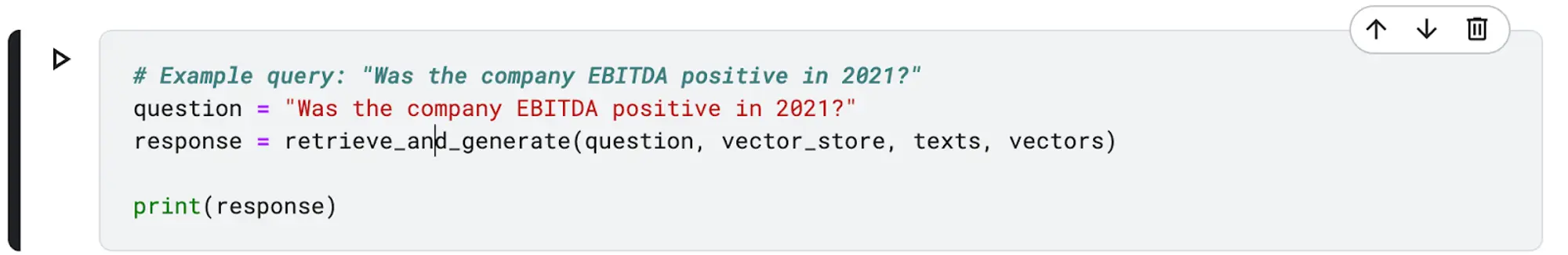

Step 6: Testing Our Mannequin

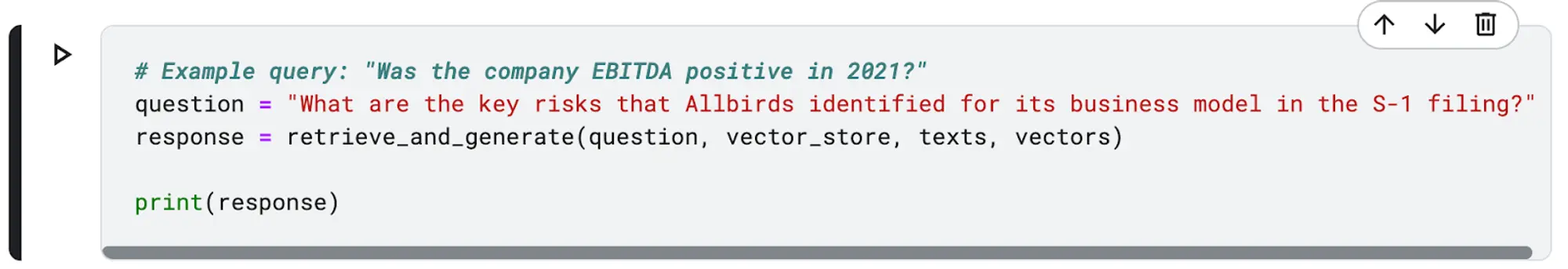

On this part, we’re going to take a look at our RAG system by utilizing the next instance queries:

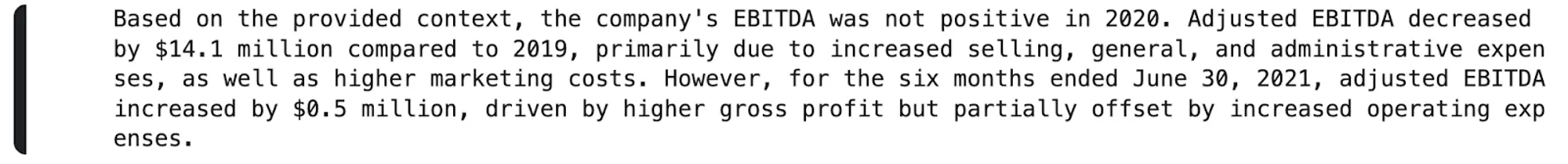

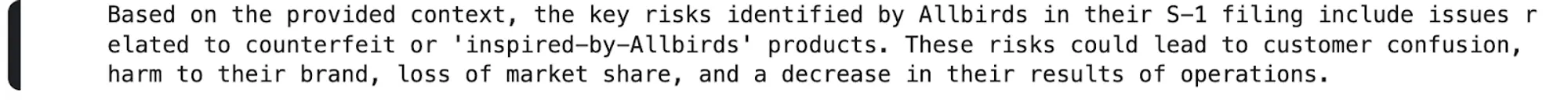

As proven above, the llama 3 mannequin takes within the context retrieved by our retrieval system and utilizing it generates an updated and a extra educated reply to our question.

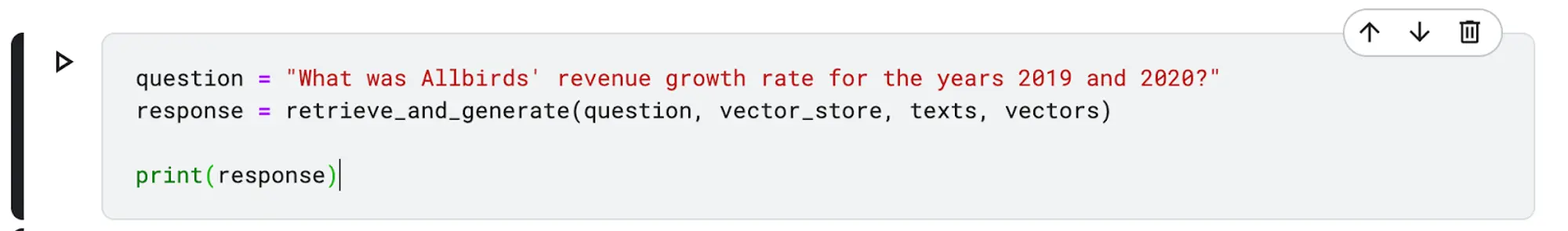

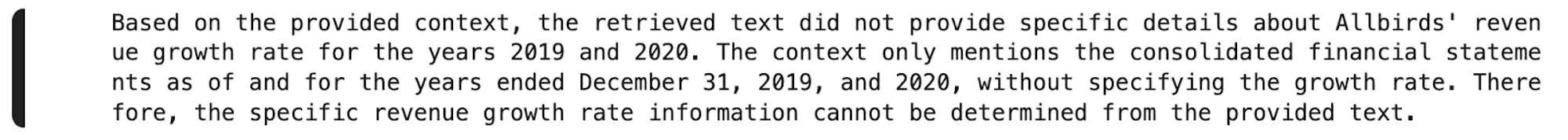

Above is one other question that the mode was able to replying to utilizing extra context from our vector database.

Lastly, once we requested our mannequin the above given question, the mannequin replied that no particular particulars the place given that may help in it answering the given question. Yow will discover the hyperlink to the pocket book to your reference right here.

What’s OCR?

Monetary paperwork like S-1 filings, Okay-1 types, and financial institution statements include important information about an organization’s monetary well being and operations. Information extraction from such paperwork is complicated because of the mixture of structured and unstructured content material, reminiscent of tables and narrative textual content. In circumstances the place S-1 and Okay-1 paperwork are in picture or non-readable PDF file codecs, OCR is crucial. It permits the conversion of those codecs into textual content that machines can course of, making it doable to combine them into RAG techniques. This ensures that each one related data, whether or not structured or unstructured, could be precisely extracted by using these AI and Machine studying algorithms.

How Nanonets Can Be Used to Improve RAG Programs

Nanonets is a strong AI-driven platform that not solely provides superior OCR options but in addition permits the creation of customized information extraction fashions and RAG (Retrieval-Augmented Era) use circumstances tailor-made to your particular wants. Whether or not coping with complicated monetary paperwork, authorized contracts, or another intricate datasets, Nanonets excels at processing different layouts with excessive accuracy.

By integrating Nanonets into your RAG system, you possibly can harness its superior information extraction capabilities to transform giant volumes of knowledge into machine-readable codecs like Excel and CSV. This ensures your RAG system has entry to essentially the most correct and up-to-date data, considerably enhancing its capability to generate exact, contextually related responses.

Past simply information extraction, Nanonets also can construct full RAG-based options to your group. With the power to develop tailor-made functions, Nanonets empowers you to enter queries and obtain exact outputs derived from the particular information you’ve fed into the system. This custom-made method streamlines workflows, automates information processing, and permits your RAG system to ship extremely related insights and solutions, all backed by the intensive capabilities of Nanonets’ AI expertise.

The Takeaways

By now, it’s best to have a strong understanding of the best way to construct a Retrieval-Augmented Era (RAG) system for monetary paperwork utilizing the Llama 3 mannequin. This tutorial demonstrated the best way to remodel an S-1 monetary doc into phrase embeddings and use them to generate correct and contextually related responses to complicated queries.

Now that you’ve got discovered the fundamentals of constructing a RAG system for monetary paperwork, it is time to put your information into follow. Begin by constructing your individual RAG techniques and think about using OCR software program options just like the Nanonets API to your doc processing wants. By leveraging these highly effective instruments, you possibly can extract information related to your use circumstances and improve your evaluation capabilities, supporting higher decision-making and detailed monetary evaluation within the monetary sector.